Blackbaud recently added the SKY Query API for Raiser’s Edge and Financial Edge. At first I was not really sure how this would be of benefit to our products. We have worked with Lists in Raiser’s Edge and on the database view we have generated static queries of records that have been processed. I never thought that we had a need for adding criteria to queries.

Now that I have seen the new Query API, I have been inspired.

How have we got around the lack of a query API so far?

In Chimpegration we push data from Raiser’s Edge to Mailchimp. In order to decide which records to push, we let the user select an NXT list that has previously been created. There are some issues with this.

- Firstly, the list selection criteria are limited. The user cannot, for example, specify that a constituent with no email address or a blank email address should be ignored.

- The list functionality does not allow you to choose specific output fields. We offer a limited range of fields that we think that the user may want to export.

- The lists are static. You can update them manually, but having to do so prevents data from being pushed to Mailchimp on a schedule. (There are some workarounds involving Queue, but these are awkward.)

How does the Query API solve this?

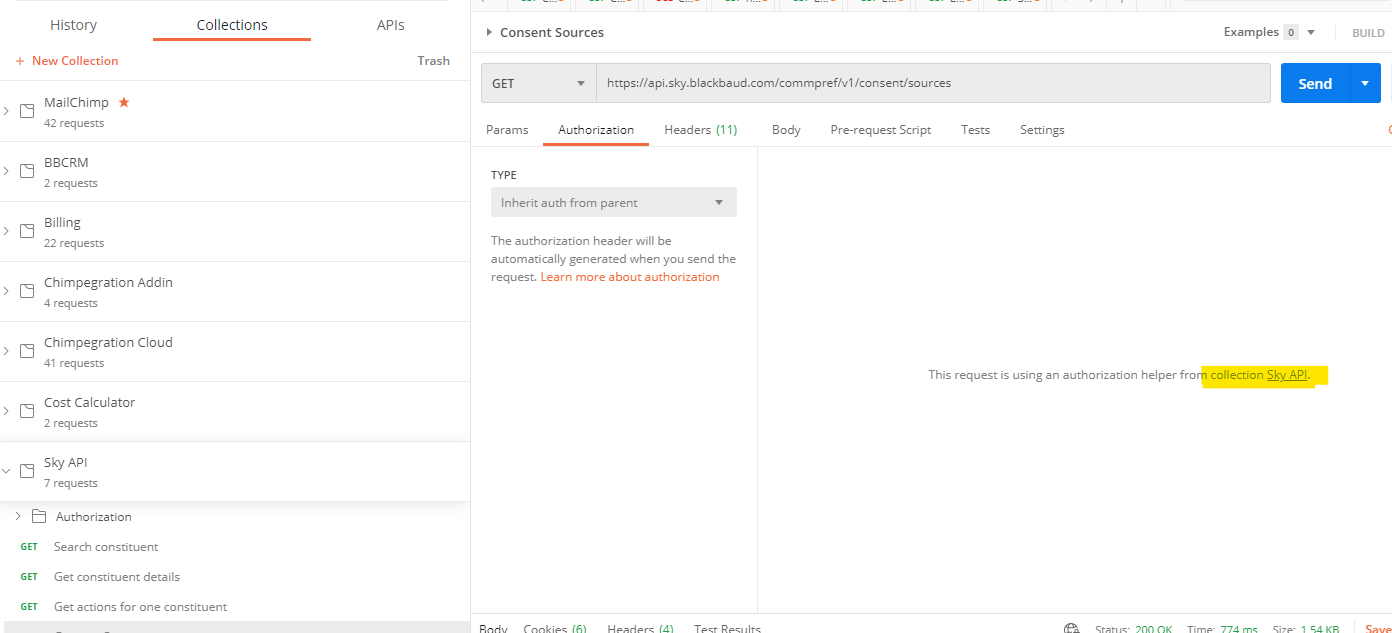

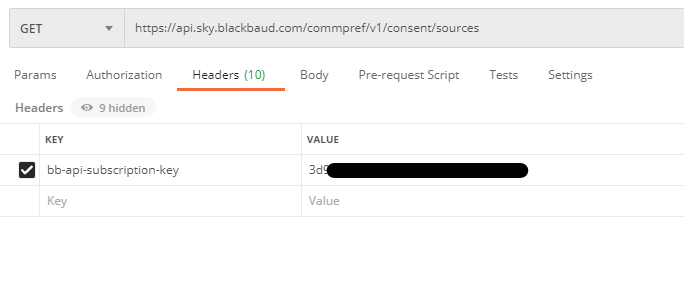

The Query API allows you to programmatically list all queries and to load one of them in particular. You can then run the query and fetch the results. This makes it fully dynamic: scheduled data exports to Mailchimp would always be up to date with the latest information.
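To make that flow concrete, here is a minimal Python sketch of the list, run and fetch steps. It is not our production code and it is not copied from the SKY API reference: the paths, the response field names (`queries`, `id`, `name`, `status`, `rows`), the status values and the idea that results are collected by polling a job are all assumptions for illustration. The `send` function is a hypothetical transport that you would implement on top of your own authenticated HTTP client.

```python
import time
from typing import Callable, List, Optional

# Hypothetical transport: (method, path, json_body) -> decoded JSON response.
Send = Callable[[str, str, Optional[dict]], dict]

# Placeholder paths for illustration only; check the SKY API reference
# for the real Query API routes before using anything like this.
LIST_QUERIES_PATH = "/queries"
RUN_QUERY_PATH = "/queries/{query_id}/run"
JOB_STATUS_PATH = "/jobs/{job_id}"


def find_query_id(send: Send, name: str) -> Optional[int]:
    """Return the id of the saved query called `name`, or None if absent."""
    listing = send("GET", LIST_QUERIES_PATH, None)
    for query in listing.get("queries", []):  # assumed response shape
        if query.get("name") == name:
            return query["id"]
    return None


def run_saved_query(send: Send, query_id: int,
                    poll_seconds: float = 2.0, max_polls: int = 30) -> List[dict]:
    """Start a saved query, then poll until its rows are available.

    Assumes the run is asynchronous and reports "Completed" or "Failed".
    """
    job = send("POST", RUN_QUERY_PATH.format(query_id=query_id), None)
    status_path = JOB_STATUS_PATH.format(job_id=job["id"])
    for _ in range(max_polls):
        status = send("GET", status_path, None)
        if status.get("status") == "Completed":
            return status.get("rows", [])
        if status.get("status") == "Failed":
            raise RuntimeError(f"Query {query_id} failed")
        time.sleep(poll_seconds)
    raise TimeoutError(f"Query {query_id} did not finish in time")
```

Because the query is run each time rather than read from a stored list, a scheduled job could call `find_query_id` and `run_saved_query` on every run and always push the current results.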

The user can choose the output data. Whereas previously they were limited to the fields we chose to offer, now they can choose any value available to them in a query. Most organisations won’t want to push a membership attribute or a gift notepad to Mailchimp, but there is bound to be one out there that wants to do something like that, or some other option that we had not considered. With the full range of output fields they are no longer restricted to what we offer them.

The same goes for criteria. Previously the user was restricted to the fields available in lists. Now the whole range of query fields can be a part of the criteria. If an organisation only wants to export constituents attending a specific event with a large t-shirt size, now they can!

What else can we do with Query API?

In Chimpegration Classic and in Importacular we look up constituents with criteria sets. It has been much simpler to look up constituents with a wide range of criteria. There is some scope for this based on the constituent list API endpoint. However, the Query API really gives this some muscle.

At first I thought that, even if we could do it, it would not be practical to create a query each time the user wanted to use criteria to search. We would end up creating a lot of queries saved in Raiser’s Edge. It is not certain that the user would have rights to delete a query. It just felt wrong.

However, the Query API includes the ability to generate a query on the fly. By including the filter, output fields and sort values in the JSON payload, the query can be run without explicitly saving it to the organisation’s environment. This is a real game changer.
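As an illustration of what such a payload might look like, here is a small Python sketch that assembles the three parts mentioned above: output fields, filter and sort. The key names (`query`, `select_fields`, `filter_fields`, `sort_fields`), the operator value and the field names are hypothetical placeholders rather than the documented schema, so treat this as the shape of the idea only.

```python
import json
from typing import Any, Dict, List


def build_ad_hoc_query(select_fields: List[str],
                       filters: List[Dict[str, Any]],
                       sort_fields: List[str]) -> Dict[str, Any]:
    """Assemble an on-the-fly query definition as a plain dict.

    All key names here are illustrative assumptions, not the real schema.
    """
    return {
        "query": {
            # Output columns the user wants back.
            "select_fields": [{"field": name} for name in select_fields],
            # Criteria, passed through as given by the caller.
            "filter_fields": filters,
            # Sort order, ascending by default in this sketch.
            "sort_fields": [{"field": name, "order": "Ascending"}
                            for name in sort_fields],
        }
    }


# Example: only constituents whose email address is not blank.
example = build_ad_hoc_query(
    select_fields=["Constituent name", "Email address"],
    filters=[{"field": "Email address", "operator": "NotBlank"}],
    sort_fields=["Constituent name"],
)
example_json = json.dumps(example, indent=2)  # body to send with the request
```

Nothing in this definition has to exist as a saved query in Raiser’s Edge beforehand, which is what removes the clutter and permissions worries described above.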

The SKY API documentation does suggest that this should not be used instead of the constituent list and constituent search endpoints, as they are optimised for search. However, being able to search on more obscure areas of Raiser’s Edge adds a lot of record look-up power that was previously missing.

When are we releasing the new Chimpegration functionality?

We are actively developing this functionality. However, the Query API is still in preview, so it is uncertain when this will be released. We may also release it with the caveat that our functionality is in preview and may break at any time (due to changes in the Query API). This is a matter of a few weeks though, so watch this space for a demo!